As artificial intelligence continues to reshape how businesses operate, one phrase is gaining attention in professional circles: keeper ai standards test. Whether you encountered it while researching AI compliance, cybersecurity tools, or vendor verification, the term generally points to one core idea: evaluating whether an AI system meets defined benchmarks for safety, accuracy, ethics, and performance.

But what exactly does that involve? Is it a certification? A compliance audit? A hiring exam? Or something else entirely?

Let’s break it down clearly and practically, without jargon or hype.

Understanding the Concept Behind the Keeper AI Standards Test

At its core, the keeper ai standards test refers to an evaluation process used to determine whether an AI system, model, or solution meets predetermined operational and ethical standards.

These standards usually cover areas like:

- Performance reliability

- Data security and encryption

- Bias detection and fairness

- Regulatory alignment

- Transparency and explainability

The purpose isn’t just technical validation; it’s trust validation.

When AI systems influence financial decisions, hiring outcomes, cybersecurity alerts, or medical diagnostics, reliability becomes non-negotiable.

Why AI Standards Testing Is No Longer Optional

AI adoption has accelerated across industries. Businesses now use machine learning models for:

- Fraud detection

- Customer support automation

- Resume screening

- Predictive analytics

- Threat monitoring

Without structured evaluation, these systems can produce unintended consequences. Biased results, security vulnerabilities, or incorrect outputs can quickly escalate into financial loss or legal exposure.

I once reviewed an AI-driven analytics tool that looked flawless during demonstrations, but under stress testing, it showed significant inconsistencies in edge-case scenarios. That experience made it clear: performance claims mean little without structured validation.

Standards testing checks AI systems not just for what they do well but for how they behave under pressure.

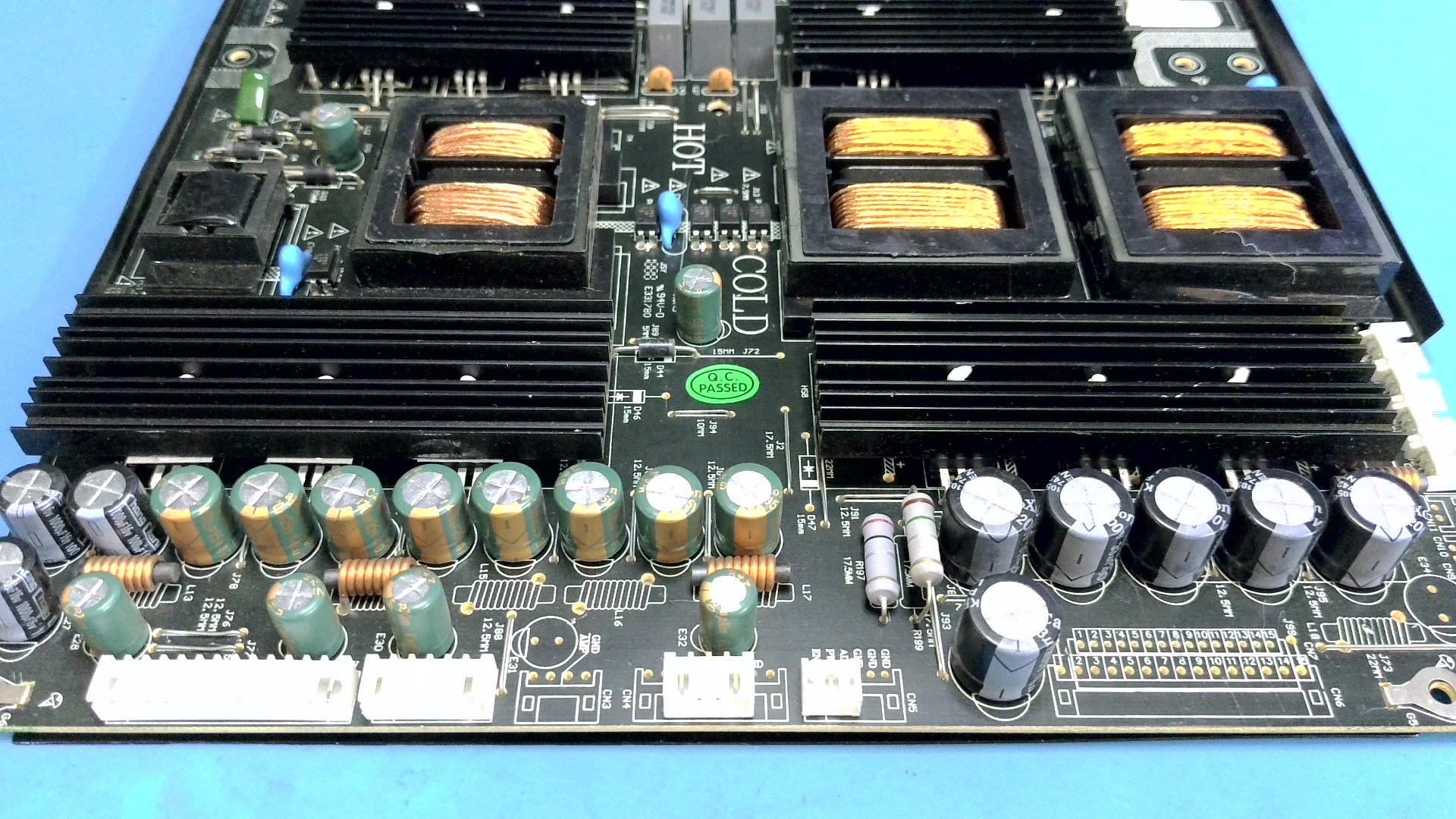

Performance Evaluation: AI in Security Infrastructure

Imagine a company deploying AI-based monitoring software to detect internal security threats. The system flags suspicious activity automatically.

Without proper standards testing:

- It may generate a high volume of false positives, overwhelming security teams.

- It could miss sophisticated threats due to training gaps.

- Sensitive data might be processed without adequate encryption.

With structured evaluation in place, the organization would test:

- Detection accuracy rates

- False positive ratios

- Data handling protocols

- Incident reporting reliability

The result? Fewer blind spots, stronger security posture, and reduced operational risk.
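
The first two items in that test list can be computed directly from a labeled evaluation run. The following is a minimal sketch, assuming you already have confusion-matrix counts for the monitoring system; the `AlertCounts` class, the function names, and the sample numbers are all hypothetical and only illustrate the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class AlertCounts:
    """Confusion-matrix counts from a labeled evaluation run (hypothetical)."""
    true_positives: int   # real threats that were flagged
    false_positives: int  # benign events that were flagged
    true_negatives: int   # benign events that were left alone
    false_negatives: int  # real threats that were missed


def detection_rate(c: AlertCounts) -> float:
    """Share of real threats the system flagged (also called recall)."""
    actual_threats = c.true_positives + c.false_negatives
    return c.true_positives / actual_threats if actual_threats else 0.0


def false_positive_rate(c: AlertCounts) -> float:
    """Share of benign events that were incorrectly flagged."""
    benign_events = c.false_positives + c.true_negatives
    return c.false_positives / benign_events if benign_events else 0.0


def alert_precision(c: AlertCounts) -> float:
    """Share of raised alerts that pointed at a real threat."""
    flagged = c.true_positives + c.false_positives
    return c.true_positives / flagged if flagged else 0.0


# Made-up numbers for illustration only.
counts = AlertCounts(true_positives=45, false_positives=30,
                     true_negatives=920, false_negatives=5)

print(f"detection rate:      {detection_rate(counts):.2%}")
print(f"false positive rate: {false_positive_rate(counts):.2%}")
print(f"alert precision:     {alert_precision(counts):.2%}")
```

In this invented run the system catches 45 of 50 real threats, yet only 45 of its 75 alerts are genuine. That is the kind of gap that overwhelms a security team even when the headline detection rate looks strong.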

What Does a Keeper AI Evaluation Typically Measure?

Although different organizations may define their own criteria, AI standards assessments usually focus on four pillars.

Performance Benchmarks

- Accuracy consistency

- Model stability

- Latency and response time

- Error rate thresholds
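
Benchmarks like these only become testable once each one has a number attached. Here is a rough sketch of a pass/fail check, assuming accuracy is measured over several evaluation runs and latency is sampled in milliseconds. The threshold values and the names `THRESHOLDS`, `percentile_nearest_rank`, and `evaluate_benchmarks` are illustrative assumptions, not part of any published standard.

```python
import math

# Hypothetical thresholds; a real program would set these per use case.
THRESHOLDS = {
    "max_error_rate": 0.05,       # mean error across runs
    "max_accuracy_spread": 0.02,  # best run minus worst run
    "max_p95_latency_ms": 200.0,  # 95th percentile response time
}


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: smallest value covering pct% of samples."""
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def evaluate_benchmarks(accuracies, latencies_ms, thresholds):
    """Return a pass/fail flag for each benchmark."""
    mean_accuracy = sum(accuracies) / len(accuracies)
    spread = max(accuracies) - min(accuracies)
    p95 = percentile_nearest_rank(latencies_ms, 95)
    return {
        "error_rate": (1 - mean_accuracy) <= thresholds["max_error_rate"],
        "accuracy_spread": spread <= thresholds["max_accuracy_spread"],
        "p95_latency": p95 <= thresholds["max_p95_latency_ms"],
    }


# Made-up measurements for illustration only.
report = evaluate_benchmarks(
    accuracies=[0.96, 0.97, 0.965],
    latencies_ms=[120, 135, 150, 180, 240],
    thresholds=THRESHOLDS,
)

for name, passed in report.items():
    print(f"{name}: {'pass' if passed else 'FAIL'}")
```

Reporting each benchmark separately, rather than one overall score, makes it obvious which layer failed. Here the accuracy figures pass while the slowest latency sample pushes the 95th percentile over the limit.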

Ethical & Fairness Checks

- Bias detection across demographic groups

- Decision transparency

- Explainability of outputs
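
One simple way to look for bias across demographic groups is to compare how often each group receives a favorable outcome. The sketch below assumes decisions are available as `(group, selected)` pairs. The function names and the sample data are hypothetical, and selection-rate comparison is only one of several fairness measures an audit might use.

```python
from collections import defaultdict


def selection_rates(records):
    """Favorable-outcome rate per group from (group, selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Made-up screening outcomes for illustration only.
records = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 25 + [("group_b", False)] * 75
)

rates = selection_rates(records)
print("selection rates:", rates)
print(f"parity gap:   {parity_gap(rates):.3f}")
print(f"impact ratio: {impact_ratio(rates):.3f}")
```

What gap or ratio counts as acceptable is a policy decision rather than something the code can settle. The value of the check is that it surfaces the disparity so someone can review it.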

Data Protection & Security

- Encryption practices

- Access controls

- Storage safeguards

- Compliance with data protection regulations

Operational Reliability

- System resilience under load

- Recovery mechanisms

- Continuous monitoring processes

Each category strengthens a different layer of AI reliability.

Comparison: AI Without Standards vs AI With Standards

Here’s a simplified comparison to illustrate why evaluation matters:

| Category | Without Structured Testing | With Standards Validation |
|---|---|---|
| Accuracy | Unpredictable | Benchmarked and monitored |
| Bias Risk | Often unnoticed | Identified and corrected |
| Security | Potential vulnerabilities | Controlled safeguards |
| Compliance | Legal uncertainty | Verified alignment |
| Trust Level | Questionable | Strong organizational confidence |

The difference isn’t incremental; it’s foundational.

Is the Keeper AI Standards Test a Certification?

Sometimes. In certain contexts, it may refer to an internal or third-party validation program used by organizations to certify that AI tools meet specific operational criteria.

In other cases, it’s more broadly used to describe the general process of testing AI systems against predefined standards.

If you’re researching this term, consider the context:

- Vendor product validation

- Enterprise AI compliance audit

- Security software evaluation

- Professional skills assessment

The phrase can adapt depending on industry usage.

Growing Importance in Regulated Industries

Industries with strict regulatory oversight are especially focused on AI validation, including:

- Financial services

- Healthcare

- Government systems

- Cybersecurity operations

- Insurance and risk management

Organizations operating in these sectors cannot rely on “black box” models without validation. Transparent testing procedures provide accountability and reduce exposure.

In competitive markets, the companies that rigorously test their AI systems gain something powerful: confidence from customers, regulators, and stakeholders.

How Organizations Conduct AI Standards Testing

A typical evaluation process might include:

- Defining performance benchmarks

- Running controlled data simulations

- Performing bias audits

- Testing data security safeguards

- Documenting results

- Approving conditional deployment

- Establishing continuous monitoring

AI systems evolve over time, especially those that use adaptive learning. Ongoing review is essential to maintain alignment with standards.
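
The last step, continuous monitoring, can be as simple as comparing recent accuracy against the figure recorded at approval time. This is a minimal sketch, assuming each prediction can later be marked correct or incorrect. The `AccuracyMonitor` class, its default tolerance, and the window size are illustrative assumptions.

```python
from collections import deque


class AccuracyMonitor:
    """Flags a model for review when rolling accuracy falls below baseline."""

    def __init__(self, baseline, tolerance=0.03, window=100):
        self.baseline = baseline    # accuracy documented at approval time
        self.tolerance = tolerance  # allowed drop before review is triggered
        self.outcomes = deque(maxlen=window)  # keeps only the latest results

    def record(self, was_correct):
        """Store whether one reviewed prediction turned out to be correct."""
        self.outcomes.append(1 if was_correct else 0)

    def current_accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True once a full window shows a drop larger than the tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough evidence yet
        return self.baseline - self.current_accuracy() > self.tolerance


# Made-up outcomes for illustration only: 8 correct, then 2 incorrect.
monitor = AccuracyMonitor(baseline=0.95, tolerance=0.03, window=10)
for outcome in [True] * 8 + [False] * 2:
    monitor.record(outcome)

print("rolling accuracy:", monitor.current_accuracy())
print("needs review:", monitor.needs_review())
```

A fixed-size window means old results drop out automatically, so the check reflects how the system behaves now rather than how it behaved when it was first approved.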

Why This Matters for Professionals

For AI developers, compliance officers, or security teams, understanding AI standards testing isn’t optional anymore.

Professionals who understand:

- Model explainability

- Fairness metrics

- Security validation

- Risk assessment

are increasingly valuable in the modern workforce.

Organizations don’t just want innovation; they want responsible innovation.

The Bigger Shift: From Innovation to Accountability

Early AI development focused heavily on capability: what systems could do. Today, the conversation has shifted toward responsibility: how systems behave, how data is handled, and whether decisions can be justified.

The keeper ai standards test concept reflects that shift.

It signals that performance alone isn’t enough. AI must be:

- Transparent

- Measurable

- Secure

- Fair

- Reliable

Companies that embrace this mindset reduce long-term risk while strengthening credibility.

Conclusion

The keeper ai standards test represents more than just an evaluation process; it reflects a broader movement toward responsible AI deployment.

As artificial intelligence becomes embedded in decision-making systems across industries, structured validation helps ensure those systems are accurate, ethical, and secure.

Organizations that prioritize AI standards testing don’t just avoid risk; they build trust. And in a world increasingly shaped by algorithms, trust is the most valuable asset of all.

FAQs

What is the keeper ai standards test?

It refers to an evaluation process used to measure whether AI systems meet predefined benchmarks for performance, ethics, and security.

Is it an official global certification?

Not necessarily. The term can describe internal testing programs, vendor evaluations, or structured compliance assessments.

Why is AI standards testing important?

It helps reduce bias, improve accuracy, strengthen data security, and support regulatory compliance.

Who should care about AI standards testing?

Organizations deploying AI tools, especially in regulated industries like finance, healthcare, and cybersecurity.

How often should AI systems be evaluated?

Regular audits are recommended, particularly after major updates or regulatory changes.